DeepSeek has released a new paper, with co-founder Liang Wenfeng credited as a contributor, detailing how its latest large language model, DeepSeek-V3, achieves efficient training and inference using only 2,048 H800 GPUs, far fewer than the tens of thousands typically required. The team attributes this efficiency to four key innovations: memory optimization through multi-head latent attention (MLA), computational savings from a Mixture-of-Experts (MoE) design combined with FP8 precision, communication improvements from a multi-plane network topology, and faster inference through multi-token prediction (MTP). With MLA, the KV cache shrinks to just 70 KB per token, as little as one-seventh of what competing models require. The MoE architecture activates only 37 billion of the model's 671 billion parameters per token, which the team says cuts training costs by 90% compared with dense models. FP8 training further halves compute and memory usage with minimal loss of accuracy. Beyond the model itself, the paper outlines five future directions for AI hardware design, advocating tighter integration of software and hardware to address memory, compute, and networking bottlenecks. [36Kr, in Chinese]
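To make the quoted figures more concrete, here is a minimal back-of-envelope sketch in Python. It uses only the numbers reported above (70 KB of KV cache per token, 37 billion of 671 billion parameters active) and relies on a few loudly labeled assumptions: 1 KB is taken as 1,024 bytes, the FP8 comparison assumes an idealized 16-bit (BF16) baseline at 2 bytes per parameter versus 1 byte for FP8, and the 32,768-token context length is a hypothetical example. This is illustrative arithmetic, not DeepSeek's own accounting.

```python
# Back-of-envelope arithmetic using the figures reported for DeepSeek-V3.
# Assumptions (not from the paper): 1 KB = 1024 bytes; an idealized BF16
# baseline of 2 bytes/parameter vs. 1 byte/parameter for FP8; the context
# length used below is a hypothetical example.

KV_BYTES_PER_TOKEN = 70 * 1024   # ~70 KB of KV cache per token with MLA (reported)
TOTAL_PARAMS = 671e9             # total parameters (reported)
ACTIVE_PARAMS = 37e9             # parameters activated per token (reported)


def kv_cache_gib(num_tokens: int, bytes_per_token: int = KV_BYTES_PER_TOKEN) -> float:
    """KV cache size in GiB for a single sequence of num_tokens tokens."""
    return num_tokens * bytes_per_token / 2**30


def active_fraction(active: float = ACTIVE_PARAMS, total: float = TOTAL_PARAMS) -> float:
    """Fraction of the MoE model's parameters used for each token."""
    return active / total


def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed just to store num_params weights at a given precision."""
    return num_params * bytes_per_param / 2**30


if __name__ == "__main__":
    context = 32_768  # hypothetical context length
    print(f"KV cache for {context} tokens: {kv_cache_gib(context):.2f} GiB")
    print(f"Active parameter fraction: {active_fraction():.1%}")
    bf16 = weight_memory_gib(TOTAL_PARAMS, 2)  # idealized 16-bit storage
    fp8 = weight_memory_gib(TOTAL_PARAMS, 1)   # idealized 8-bit storage
    print(f"Weights: {bf16:.0f} GiB in BF16 vs {fp8:.0f} GiB in FP8")
```

Under these assumptions, a 32,768-token sequence needs about 2.19 GiB of KV cache, and roughly 5.5% of the parameters are active per token. The weight-storage comparison only shows the idealized halving from 2 bytes to 1 byte per parameter; it does not model which tensors a real FP8 training pipeline keeps at higher precision.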

(Editor: {typename type="name"/})

Sabalenka vs. Svitolina 2025 livestream: Watch Madrid Open for free

Sabalenka vs. Svitolina 2025 livestream: Watch Madrid Open for free

Polyamorous influencer breakups: What happens when hypervisible relationships end

Polyamorous influencer breakups: What happens when hypervisible relationships end

OpenAI gifts developers with enhanced voice and reasoning models

OpenAI gifts developers with enhanced voice and reasoning models

Best video game deal: Get Super Mario Bros. Wonder for $42.99 at Woot

Best video game deal: Get Super Mario Bros. Wonder for $42.99 at Woot

The Made in America iPhone: How much would it cost?

The Made in America iPhone: How much would it cost?

Bread and Circuses

...[Details]

Bread and Circuses

...[Details]

Shop Sonos speaker deals at Amazon — save up to 39%

SAVE UP TO 39%:Get Sonos speakers on sale ahead of the holidays. Get the Sonos Ray soundbar for just

...[Details]

SAVE UP TO 39%:Get Sonos speakers on sale ahead of the holidays. Get the Sonos Ray soundbar for just

...[Details]

Best AirPods deal: Save $10 on Apple AirPods 4

SAVE $10: As of Dec. 20, the Apple AirPods 4 are on sale at Amazon for $119. That's a saving of 8% o

...[Details]

SAVE $10: As of Dec. 20, the Apple AirPods 4 are on sale at Amazon for $119. That's a saving of 8% o

...[Details]

Arkadium mini crossword answers for December 17

The Daily Mini Crossword is one of the many popular daily word games available on Mashable. Powered

...[Details]

The Daily Mini Crossword is one of the many popular daily word games available on Mashable. Powered

...[Details]

Best earbuds deal: Save 20% on Soundcore Sport X20 by Anker

SAVE OVER $10:As of April 25, the Soundcore Sport X20 by Anker earbuds are on sale for $63.99 at Ama

...[Details]

SAVE OVER $10:As of April 25, the Soundcore Sport X20 by Anker earbuds are on sale for $63.99 at Ama

...[Details]

Best earbud deal: Get a pair of transparent Beats Studio Buds+ for just $129.99 at Amazon

SAVE $39.96:As of Dec. 17, get a pair of Beats Studio Buds+ for $129.99 at Amazon. That's a discount

...[Details]

SAVE $39.96:As of Dec. 17, get a pair of Beats Studio Buds+ for $129.99 at Amazon. That's a discount

...[Details]

Can you ever cut all ties after a breakup in the digital age?

The digital age was built on connection. We started by rekindling old connections, like how the now-

...[Details]

The digital age was built on connection. We started by rekindling old connections, like how the now-

...[Details]

Best video game deal: Get Super Mario Bros. Wonder for $42.99 at Woot

SAVE $17: As of Dec. 17, get Super Mario Bros. Wonderfor Nintendo Switch for just $42.99 at Woot, do

...[Details]

SAVE $17: As of Dec. 17, get Super Mario Bros. Wonderfor Nintendo Switch for just $42.99 at Woot, do

...[Details]

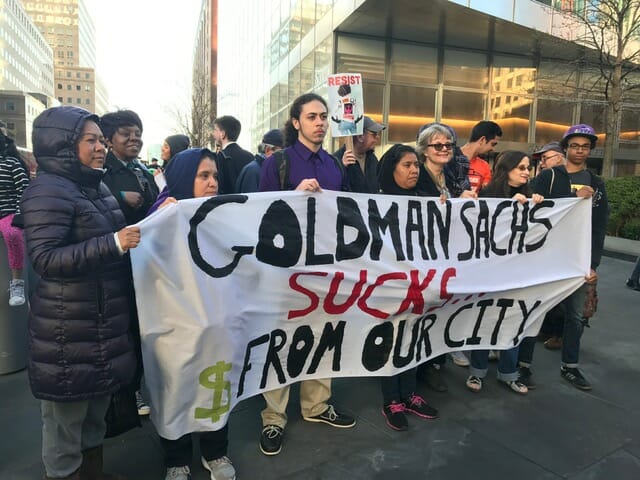

A Typical Wall Street Republican

Interviews for Resistance

...[Details]

Interviews for Resistance

...[Details]

Hydro Flask deals: Up to 50% off on water bottles and tumblers

Dec. 19th, Hydro Flask water bottles are on sale for up to 50% off from retailers like Amazon, REI,

...[Details]

Dec. 19th, Hydro Flask water bottles are on sale for up to 50% off from retailers like Amazon, REI,

...[Details]

I'm a college professor. My advice to young people who feel hooked on tech

Today's Hurdle hints and answers for December 18

接受PR>=1、BR>=1,流量相当,内容相关类链接。