No surprise here: ChatGPT is still not a reliable replacement for human hiring officers and recruiters.

In a newly published study from the University of Washington, the AI chatbot repeatedly ranked applications that included disability-related honors and credentials lower than otherwise similar applications that did not mention disabilities. The study tested several keywords, including deafness, blindness, cerebral palsy, autism, and the general term "disability."

Researchers used the publicly available CV of one of the study's authors as a baseline. They then created enhanced versions of that CV with awards and honors that implied different disabilities, such as a "Tom Wilson Disability Leadership Award" or a seat on a DEI panel, and asked ChatGPT to rank the applicants.

In 60 trials, the original CV was ranked first 75 percent of the time.

"Ranking resumes with AI is starting to proliferate, yet there’s not much research behind whether it’s safe and effective," said Kate Glazko, a computer science and engineering graduate student and the study’s lead author. "For a disabled job seeker, there’s always this question when you submit a resume of whether you should include disability credentials. I think disabled people consider that even when humans are the reviewers."

ChatGPT would also "hallucinate" ableist reasoning for why certain mental and physical illnesses would impede a candidate's ability to do the job, researchers said.

"Some of GPT’s descriptions would color a person’s entire resume based on their disability and claimed that involvement with DEI or disability is potentially taking away from other parts of the resume," wrote Glazko.

But researchers also found that some of these worrying ableist tendencies could be curbed by instructing ChatGPT not to be ableist, using the GPTs Editor feature to feed it disability justice and DEI principles. With those instructions in place, the enhanced CVs beat out the original more than half of the time, though results still varied depending on which disability the CV implied.

OpenAI's chatbot has displayed similar biases in the past. In March, a Bloomberg investigation showed that the company's GPT-3.5 model displayed clear racial preferences for job candidates, and would not only replicate known discriminatory hiring practices but also repeat stereotypes across both race and gender. In response, OpenAI said that these tests don't reflect how its AI models are used in practice in the workplace.


Google's data center raises the stakes in this state's 'water wars'

Google's data center raises the stakes in this state's 'water wars'

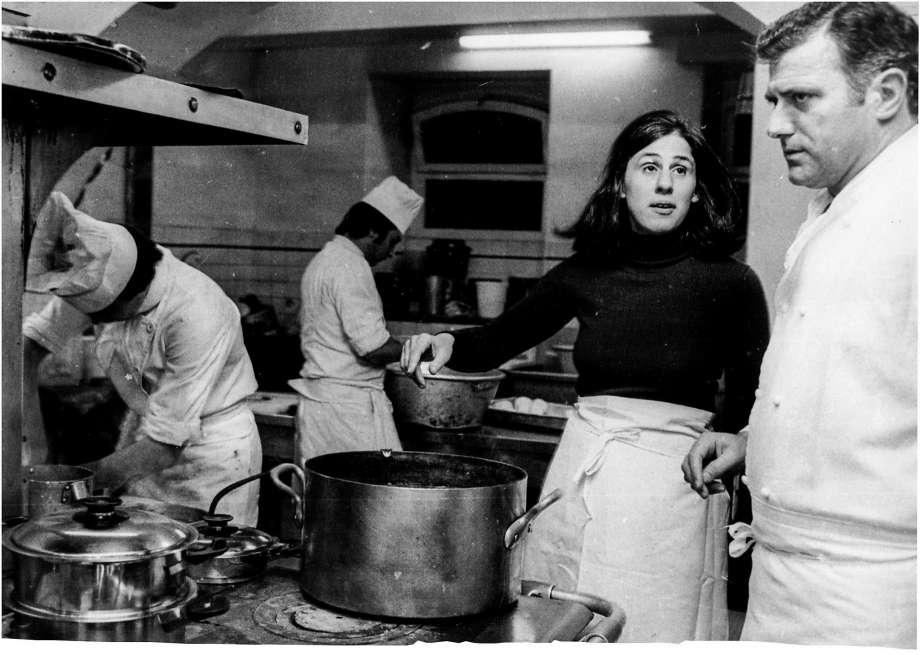

When Paula Wolfert Worked for The Paris Review

When Paula Wolfert Worked for The Paris Review

Love the Smell of Old Books? Try the Historic Book Odor Wheel

Love the Smell of Old Books? Try the Historic Book Odor Wheel

Your iPhone has built

Your iPhone has built

NYT Connections Sports Edition hints and answers for January 28: Tips to solve Connections #127

NYT Connections Sports Edition hints and answers for January 28: Tips to solve Connections #127

Obama photographer Pete Souza on Trump: 'We failed our children'

Pete Souza captured the most memorable moments of Barack Obama's presidency and now he's watching Tr

...[Details]

Pete Souza captured the most memorable moments of Barack Obama's presidency and now he's watching Tr

...[Details]

Three Kafkaesque Short Stories By … Franz Kafka

… And Other CreaturesBy Franz KafkaApril 12, 2017FictionInvestigations of a Dog and Other Creatures,

...[Details]

… And Other CreaturesBy Franz KafkaApril 12, 2017FictionInvestigations of a Dog and Other Creatures,

...[Details]

Ticketmaster cancels Taylor Swift's Eras Tour public ticket sale

Ticketmaster announced on Thursday (Nov. 17) that the public sale for tickets to Taylor Swift's Eras

...[Details]

Ticketmaster announced on Thursday (Nov. 17) that the public sale for tickets to Taylor Swift's Eras

...[Details]

Jim Harrison: A Remembrance by Terry McDonell

Jim Harrison, 1937–2016By Terry McDonellMarch 29, 2016In MemoriamPhoto: Wyatt McSpadden.The arts are

...[Details]

Jim Harrison, 1937–2016By Terry McDonellMarch 29, 2016In MemoriamPhoto: Wyatt McSpadden.The arts are

...[Details]

Boston Celtics vs. Dallas Mavericks 2025 livestream: Watch NBA online

TL;DR:Live stream Boston Celtics vs. Dallas Mavericks in the NBA with FuboTV, Sling TV, or YouTube T

...[Details]

TL;DR:Live stream Boston Celtics vs. Dallas Mavericks in the NBA with FuboTV, Sling TV, or YouTube T

...[Details]

Andy Cohen talks Elon Musk, Twitter drama, and Wordle scores

Andy Cohen isn’t the first person you’d think to ask about Elon Musk's Twitter takeover.

...[Details]

Andy Cohen isn’t the first person you’d think to ask about Elon Musk's Twitter takeover.

...[Details]

Khaby Lame is on top of the world. He's the most followed TikTok creator on the planet, travels the

...[Details]

Khaby Lame is on top of the world. He's the most followed TikTok creator on the planet, travels the

...[Details]

Straight from the Horse’s MouthBy Dan PiepenbringApril 28, 2017From the ArchiveVito Acconci, Seedbed

...[Details]

Straight from the Horse’s MouthBy Dan PiepenbringApril 28, 2017From the ArchiveVito Acconci, Seedbed

...[Details]

Dallas Mavericks vs. Boston Celtics 2025 livestream: Watch NBA online

TL;DR:Live stream Dallas Mavericks vs. Boston Celtics in the NBA with FuboTV, Sling TV, or YouTube T

...[Details]

TL;DR:Live stream Dallas Mavericks vs. Boston Celtics in the NBA with FuboTV, Sling TV, or YouTube T

...[Details]

Five Short Stories from “The Teeth of the Comb”

From The Teeth of the CombBy Osama AlomarApril 5, 2017Arts & CultureHans Thoma, Mond(detail).SWA

...[Details]

From The Teeth of the CombBy Osama AlomarApril 5, 2017Arts & CultureHans Thoma, Mond(detail).SWA

...[Details]

GPU Availability and Pricing Update: April 2022

Losing: A Memory of the Richest Kid at Boarding School

接受PR>=1、BR>=1,流量相当,内容相关类链接。